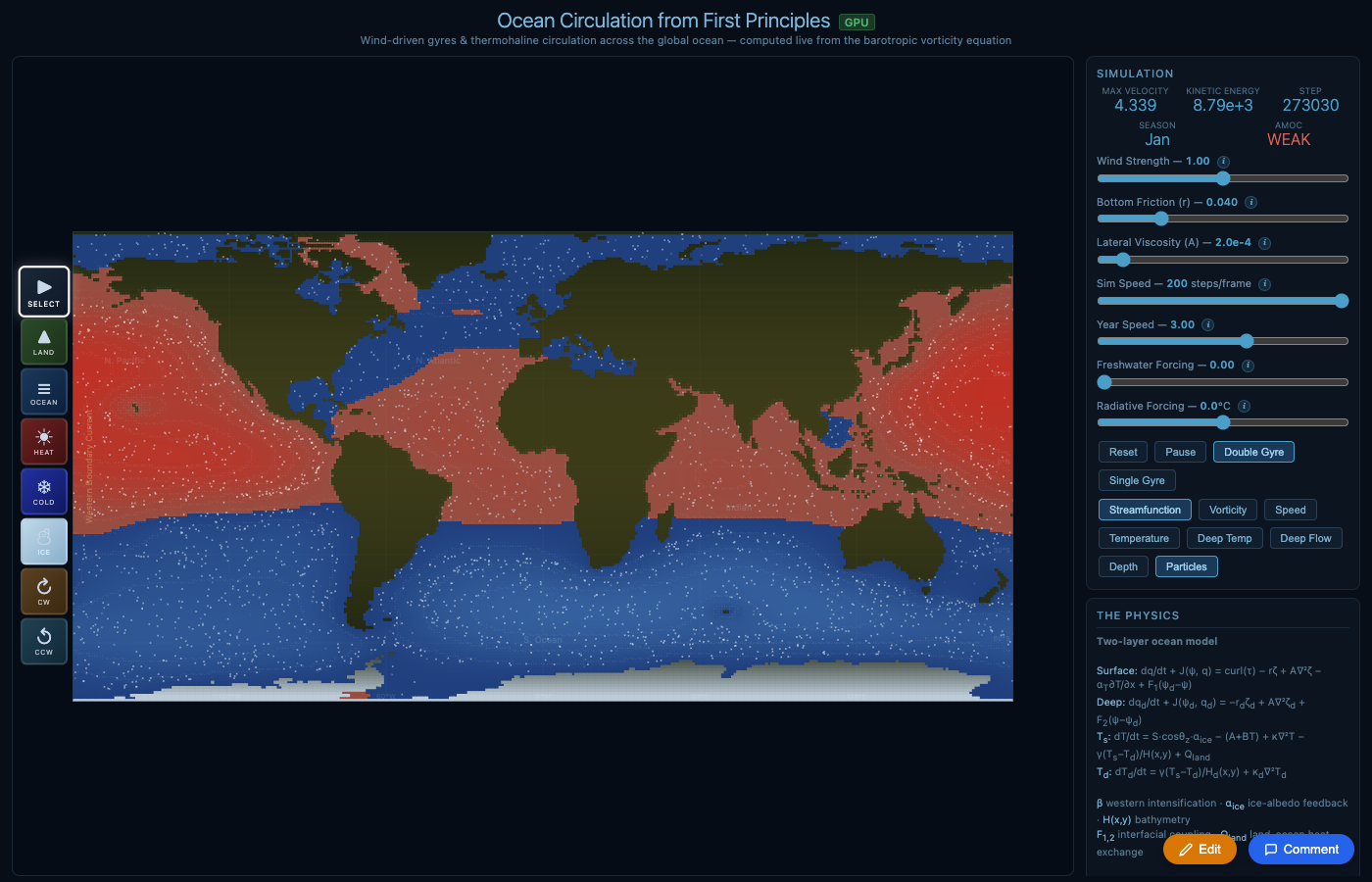

A collaboration between Luke Barrington (original physics engine + GPU compute) and Derek Lomas (salinity, AI loop, data pipeline, clouds, atmosphere, documentation).

Pre-session (Luke Barrington)

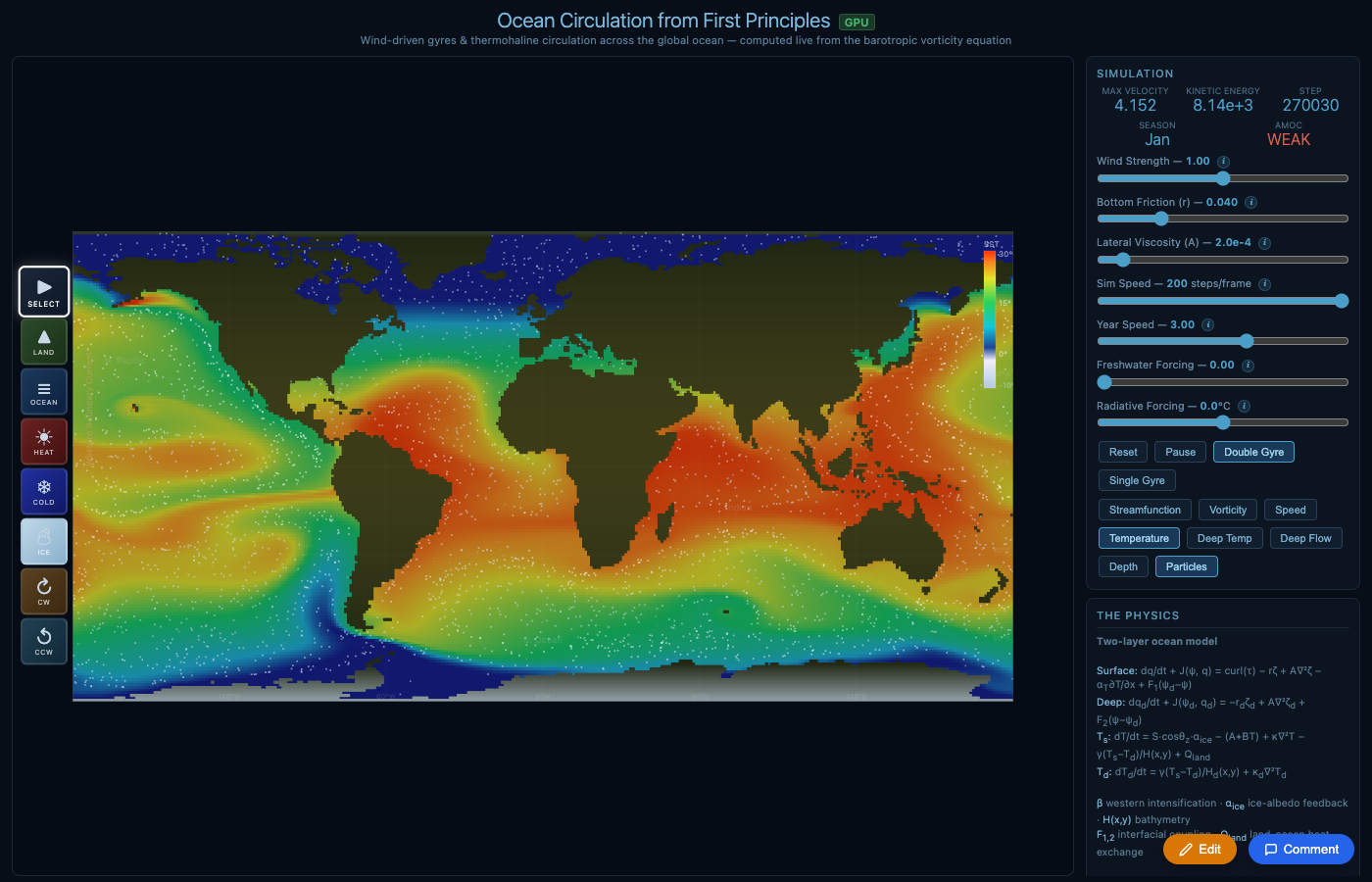

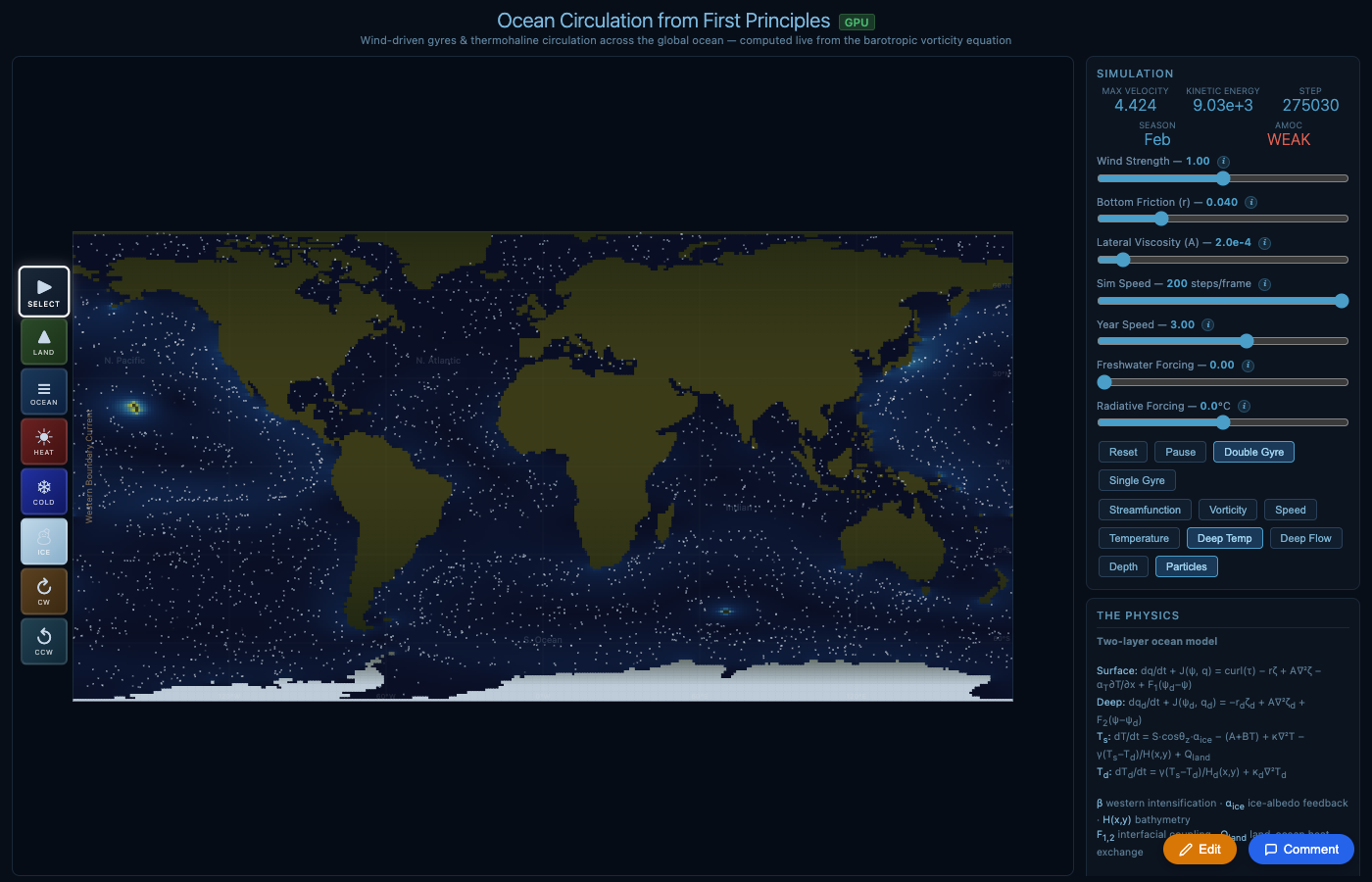

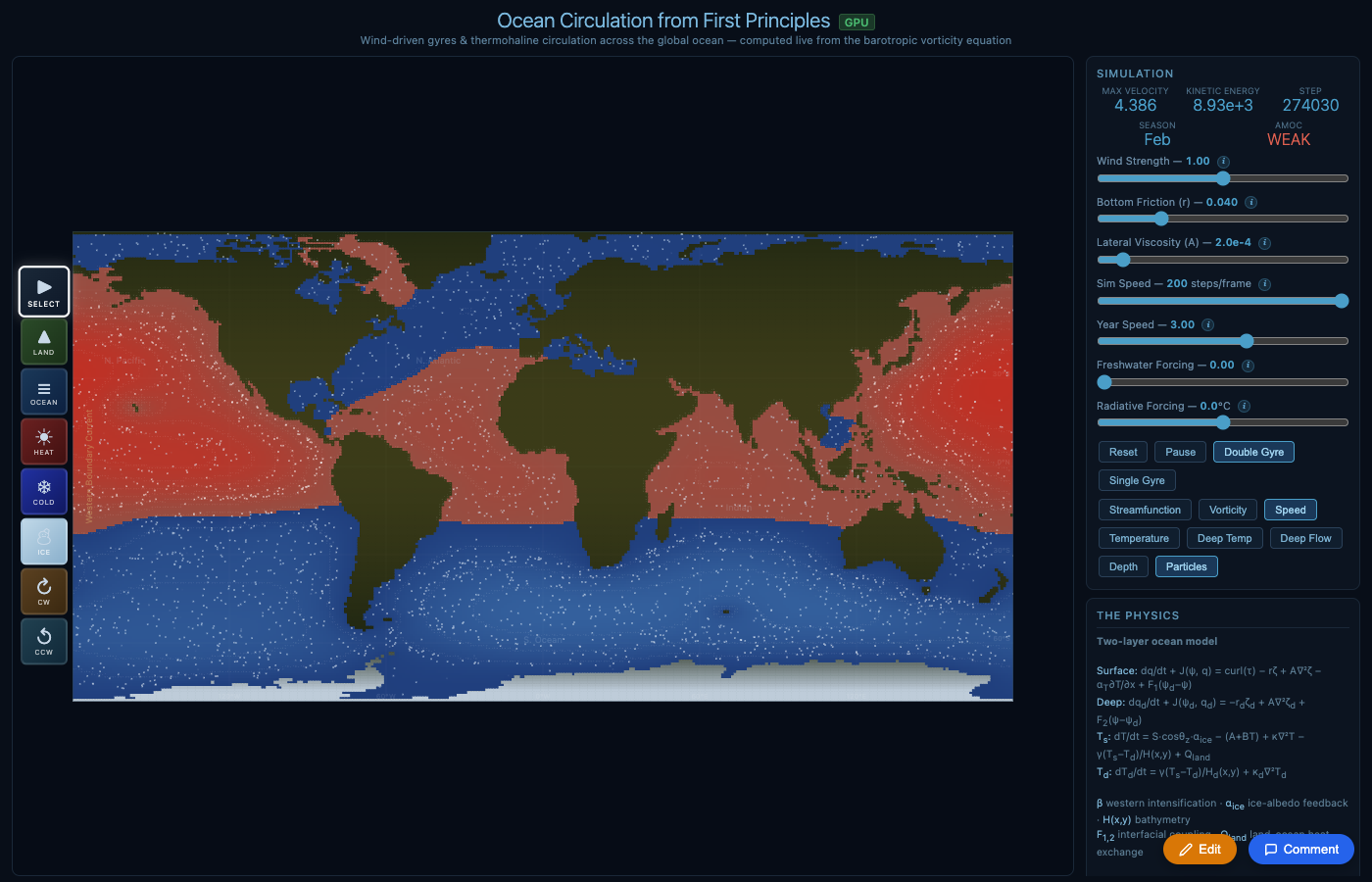

v1-v4: Barotropic vorticity + WebGPU

Wind-driven gyres, western boundary currents, the Antarctic Circumpolar Current (ACC), and a temperature field. Paleoclimate scenarios, SimEarth-style paint tools, and a real coastline mask at 1° resolution.

April 21 (Claude + Derek)

Wiggum loop + salinity + real bathymetry

3-agent AI tuning loop, full salinity field with a density equation of state (EOS), ETOPO1 bathymetry, SOR Poisson solver, observed SST initialization. AMOC goes positive for the first time. Freshwater forcing collapses the AMOC (Stommel bifurcation, live).
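For readers unfamiliar with the SOR step: in a barotropic vorticity model the Poisson solve presumably recovers the streamfunction ψ from the vorticity ζ via ∇²ψ = ζ. The following is a minimal sketch of successive over-relaxation on a uniform grid with ψ = 0 on the boundary. The function name `sor_poisson`, the relaxation factor, the tolerance, and the flat Cartesian grid are all assumptions for illustration, not the project's GPU implementation.

```python
def sor_poisson(zeta, h=1.0, omega=1.8, tol=1e-8, max_iter=10_000):
    """Solve laplacian(psi) = zeta with psi = 0 on the boundary.

    Hypothetical helper: zeta is a list of rows, h is the grid spacing,
    omega is the over-relaxation factor (1 < omega < 2).
    Returns (psi, number_of_sweeps).
    """
    ny, nx = len(zeta), len(zeta[0])
    psi = [[0.0] * nx for _ in range(ny)]
    for sweep in range(1, max_iter + 1):
        max_change = 0.0
        for j in range(1, ny - 1):
            for i in range(1, nx - 1):
                # Gauss-Seidel value from the 5-point Laplacian stencil
                gs = 0.25 * (psi[j][i - 1] + psi[j][i + 1]
                             + psi[j - 1][i] + psi[j + 1][i]
                             - h * h * zeta[j][i])
                # Over-relax: step past the Gauss-Seidel value by factor omega
                change = omega * (gs - psi[j][i])
                psi[j][i] += change
                max_change = max(max_change, abs(change))
        if max_change < tol:
            return psi, sweep
    return psi, max_iter


# Usage: a single patch of positive vorticity in the middle of a 17x17 basin
zeta = [[0.0] * 17 for _ in range(17)]
zeta[8][8] = 1.0
psi, sweeps = sor_poisson(zeta)
```

SOR is iterative, so its cost depends on the tolerance and on how well `omega` suits the grid.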

RMSE: infinity -> 3.8°C

April 23 (Luke + Derek)

FFT Poisson + Ekman + variable MLD

cos(lat) metric correction, FFT Poisson solver (exact), Ekman heat transport from wind stress, variable mixed layer depth by latitude. AMOC timeseries panel with RAPID reference.
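Two of these items reduce to short formulas. The sketch below uses the textbook relations: zonal grid spacing shrinks as dx = R cos(lat) dλ, and the meridional Ekman volume transport per unit width is -τx / (ρ f). The heat transport line multiplies that by ρ cp ΔT, where ΔT is an assumed temperature contrast between the Ekman layer and the return flow. Function names, constants, and the near-equator cutoff are illustrative assumptions, not the project's code.

```python
import math

OMEGA = 7.2921e-5        # Earth's rotation rate, rad/s
EARTH_RADIUS = 6.371e6   # m
RHO_SEAWATER = 1025.0    # kg/m^3, assumed reference density
CP_SEAWATER = 3990.0     # J/(kg K), assumed specific heat


def coriolis(lat_deg):
    """Coriolis parameter f = 2 * Omega * sin(lat)."""
    return 2.0 * OMEGA * math.sin(math.radians(lat_deg))


def zonal_spacing(lat_deg, dlon_deg=1.0):
    """Physical east-west width (m) of a grid cell: R * cos(lat) * dlon."""
    return EARTH_RADIUS * math.cos(math.radians(lat_deg)) * math.radians(dlon_deg)


def ekman_volume_transport(tau_x, lat_deg, min_abs_lat=5.0):
    """Meridional Ekman transport per unit zonal width (m^2/s).

    tau_x is the eastward wind stress in N/m^2. The formula diverges as
    f -> 0, so latitudes within min_abs_lat of the equator return 0
    (an assumed cutoff for this sketch).
    """
    if abs(lat_deg) < min_abs_lat:
        return 0.0
    return -tau_x / (RHO_SEAWATER * coriolis(lat_deg))


def ekman_heat_transport(tau_x, lat_deg, delta_t):
    """Meridional heat transport per unit zonal width (W/m)."""
    return RHO_SEAWATER * CP_SEAWATER * ekman_volume_transport(tau_x, lat_deg) * delta_t
```

With an eastward stress, the transport is equatorward in the Northern Hemisphere and also equatorward (northward) in the Southern Hemisphere, because f changes sign.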

RMSE: 3.8 -> 3.3°C

April 24 (Claude + Derek)

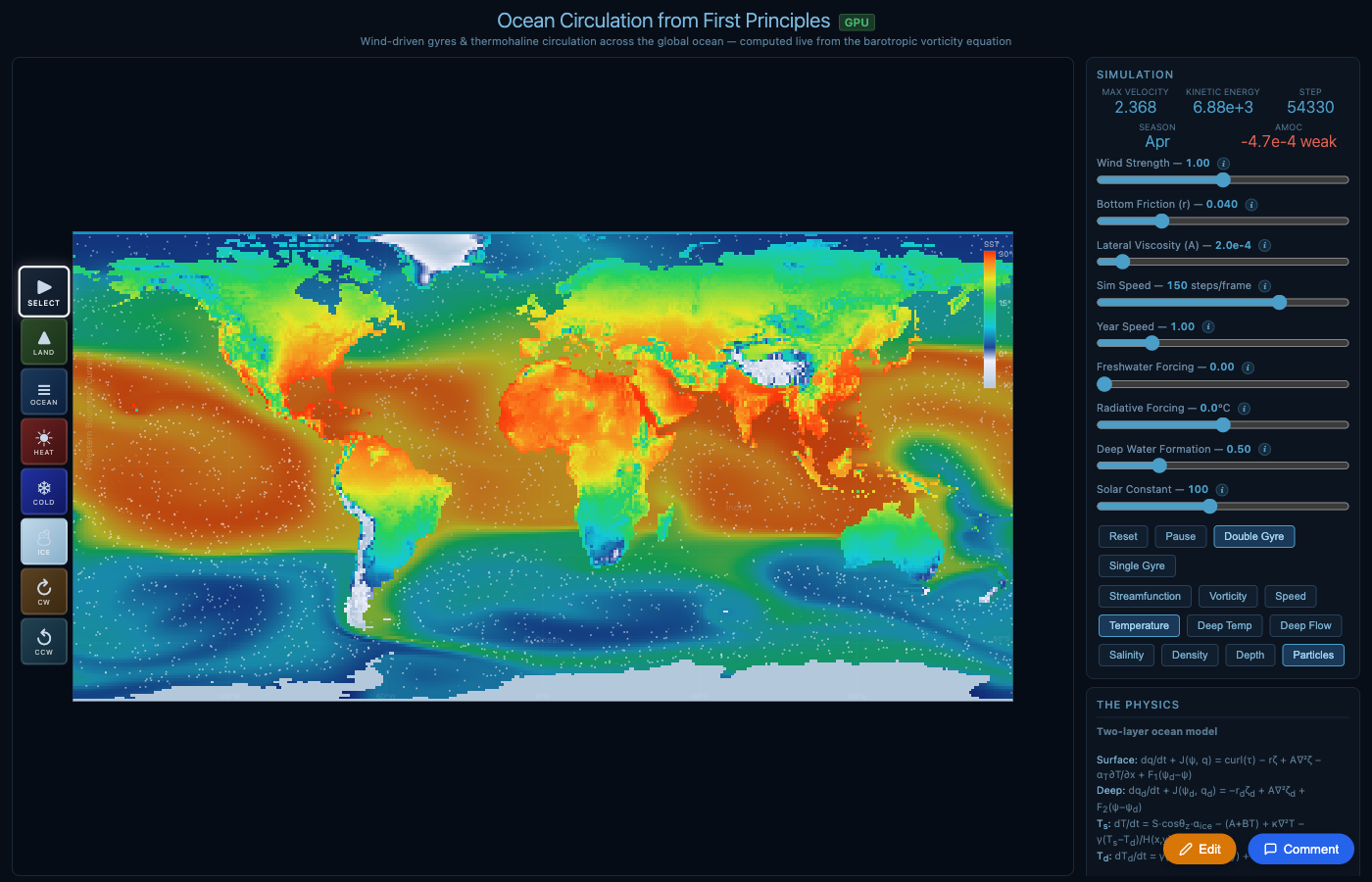

Cloud model + atmosphere + wind stress

7-regime cloud parameterization, 1-layer atmospheric energy balance with two-way coupling, ERA5 wind stress, Southern Ocean cloud fix, MODIS cloud type validation data.
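As a point of reference for the 1-layer atmospheric energy balance, here is the textbook grey-atmosphere version: a single layer, transparent to sunlight, absorbs a fraction ε of surface longwave and re-emits equally up and down. The surface warms the layer and the layer warms the surface, which is the simplest form of two-way coupling. This is a sketch under those assumptions only; the project's scheme (with clouds and moisture) may differ, and the function name and default values are illustrative.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)


def one_layer_equilibrium(solar_constant=1361.0, albedo=0.30, emissivity=0.78):
    """Equilibrium surface and atmosphere temperatures (K).

    Surface balance:     sigma*Ts^4 = absorbed_solar + eps*sigma*Ta^4
    Atmosphere balance:  eps*sigma*Ts^4 = 2*eps*sigma*Ta^4
    Combining the two:   sigma*Ts^4 * (1 - eps/2) = absorbed_solar
    """
    absorbed_solar = (1.0 - albedo) * solar_constant / 4.0
    t_surface = (absorbed_solar / (SIGMA * (1.0 - emissivity / 2.0))) ** 0.25
    t_atmos = t_surface / 2.0 ** 0.25
    return t_surface, t_atmos


t_surface, t_atmos = one_layer_equilibrium()
```

Raising `emissivity` warms the surface, and setting it to 0 recovers the no-atmosphere radiative equilibrium temperature.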

Physics: +clouds, +atmosphere, +moisture

April 25 (Claude + Derek)

Physics registry + system documentation

Complete inventory of every physical process with equations, data sources, status, and known gaps. Interaction map showing all feedback loops and parameter sensitivity chains.

PHYSICS_REGISTRY.md + SYSTEM.md